The AI Legal Revolution Has Begun

The AI lawsuits flooding courts nationwide represent more than disputes between tech giants and creative professionals. They’re reshaping how we think about data ownership, copyright protection, and the fundamental right to control our intellectual property in the age of artificial intelligence.

From Getty Images challenging Stability AI’s allegedly unauthorized use of millions of copyrighted photographs to The New York Times suing OpenAI for training ChatGPT on decades of journalism, these cases stand to set crucial precedents. The outcomes will determine whether AI companies can continue harvesting creative work without permission or compensation.

For individual creators, writers, photographers, and anyone who produces original content, these lawsuits matter immensely. The plaintiffs are fighting battles that could protect your right to control how AI systems use your creative work.

Getty Images vs. Stability AI: The Visual Arts Battleground

Getty Images filed one of the most significant AI lawsuits in February 2023, targeting Stability AI for allegedly using over 12 million copyrighted images to train its Stable Diffusion model. The lawsuit centers on copyright infringement, trademark violations, and unfair competition claims.

Getty’s complaint alleges that Stability AI scraped images from across the internet, including Getty’s watermarked photographs, without licensing agreements. The complaint includes examples of AI-generated images containing distorted Getty watermarks, which Getty presents as evidence that its photographs were used as training data.

This case matters because it challenges the AI industry’s assumption that publicly available content equals free training data. Getty argues that commercial AI training requires proper licensing, just like any other commercial use of copyrighted material.

The legal arguments focus on whether AI training constitutes fair use under copyright law. Stability AI contends that its use transforms the original works into something fundamentally different. Getty counters that this commercial exploitation undermines the market for licensed imagery.

Beyond monetary damages, Getty seeks injunctive relief to stop Stability AI from using copyrighted images without permission. This could force AI companies to rebuild training datasets using only licensed or public domain content.

New York Times vs. OpenAI: When Publishers Fight Back

The New York Times lawsuit against OpenAI and Microsoft, filed in December 2023, represents traditional media’s fight against AI training on journalistic content. The suit alleges that ChatGPT and other models were trained on millions of Times articles without authorization.

The Times presented evidence showing ChatGPT can reproduce substantial portions of its articles verbatim when prompted. The Times argues this shows that the AI model memorized, rather than merely learned patterns from, copyrighted journalism.

The lawsuit also highlights economic harm. The Times argues that AI chatbots providing its content for free reduce traffic to its website, undermining its subscription-based business model and advertising revenue.

OpenAI’s defense relies heavily on the fair use doctrine, claiming that training AI models constitutes transformative use protected by copyright law. The company argues that the purpose and character of AI training differ fundamentally from the original journalistic purpose.

The case could establish whether news organizations retain control over how their content gets used in AI training. A victory for the Times might force AI companies to negotiate licensing deals with publishers, similar to Google’s agreements with news outlets.

Author Class Actions: Books Without Permission

Multiple class action lawsuits filed by prominent authors, including John Grisham, George R.R. Martin, and Jodi Picoult, challenge AI companies for training models on copyrighted books. These AI lawsuits target Meta, OpenAI, and Anthropic for allegedly using pirated book collections.

The Authors Guild lawsuit against OpenAI alleges that the company used the Books3 dataset, which contains over 180,000 copyrighted books scraped from piracy websites. The complaint argues that this alleged mass infringement enabled ChatGPT’s sophisticated language capabilities.

Authors seek to demonstrate harm by showing that AI models can generate text mimicking their distinctive writing styles, potentially competing with their original works. Some authors report finding exact passages from their books reproduced by AI systems.

The legal strategy focuses on proving that AI training doesn’t qualify for fair use protection when it involves wholesale copying of entire works. Unlike search engines that index small portions of text, AI training ingests complete books.

These cases could force AI companies to obtain permission before training on published literature. The implications extend to academic papers, magazine articles, and any written content with copyright protection.

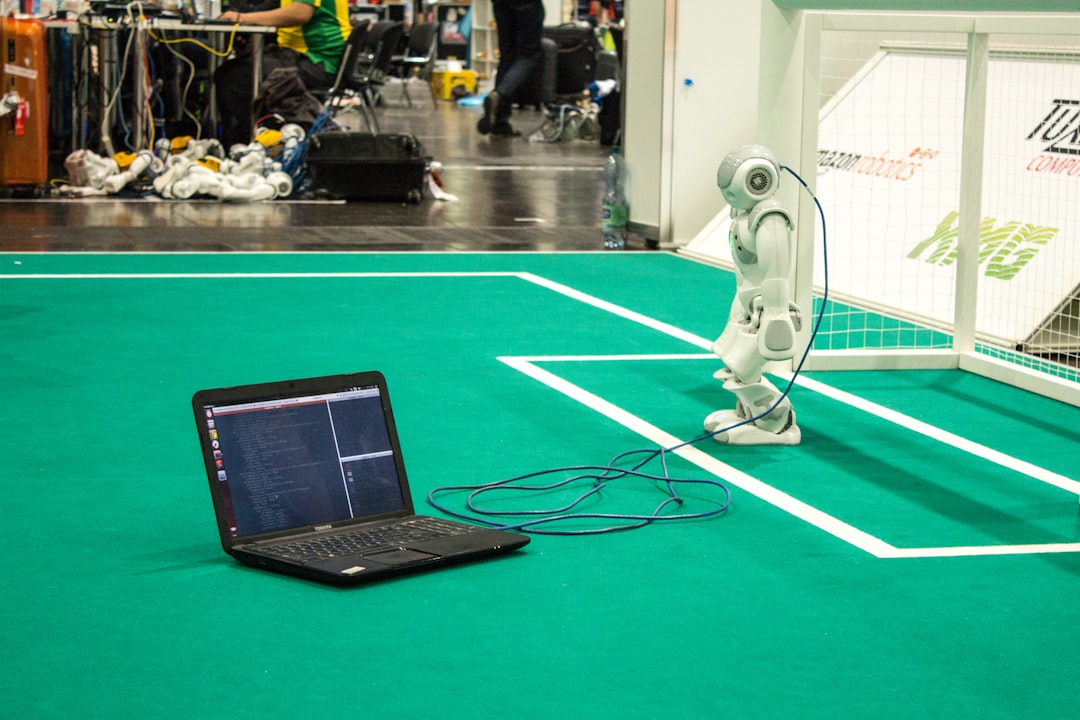

Photographers Join the Fight Against AI Training

Professional photographers have joined legal challenges against AI image generators. Andersen v. Stability AI, a proposed class action brought by visual artists, seeks to represent creators whose work allegedly trained AI models without consent.

Photographers argue that AI image generators directly compete with their services by producing images in similar styles. They contend that photography, particularly stock and commercial work, faces more immediate economic displacement from AI-generated imagery than many other creative fields.

The technical evidence offered shows AI models generating images that mimic specific photographers’ lighting techniques, composition styles, and even signature elements. Photographers argue this indicates that the training data included their copyrighted photographs.

Stock photography companies like Shutterstock initially faced photographer backlash for licensing images to AI companies. The controversy forced many platforms to revise their terms of service and create opt-out mechanisms for contributors.

These cases highlight how AI training can devalue entire professional categories. Photographers seek not just damages but industry-wide changes to how AI companies acquire training data.

What These Lawsuits Mean for Your Personal Data

While major AI lawsuits focus on professional creators, the implications extend to anyone who generates original content online. Your social media posts, blog articles, photographs, and creative works could be training AI models without your knowledge or consent.

The legal principles established in these cases will determine whether individuals have meaningful control over their digital creations. If courts rule that AI training requires permission, it could revolutionize how tech companies handle user-generated content.

Current AI training practices treat anything publicly posted as potential training data. This approach assumes that posting content online constitutes blanket permission for any commercial use, including AI training.

The lawsuits challenge this assumption by arguing that copyright law protects creative works regardless of where they’re published. Even posting a photograph on social media doesn’t surrender your exclusive rights to that image.

For individuals, these cases could establish the right to opt out of AI training or receive compensation when their content contributes to commercial AI development. The outcomes may determine whether future AI systems need explicit permission before using personal creative works.

Why Proving Data Ownership Matters Now

As AI lawsuits progress through courts, proving original ownership of creative works becomes increasingly crucial. Many creators struggle to demonstrate they created specific content before AI companies scraped it for training purposes.

Traditional copyright registration provides some protection, but the process is expensive and time-consuming for individual creators. Most people don’t register every photograph, article, or creative work they produce.

The Personal Data Asset Origination System (PDAOS™) concept addresses this challenge by creating immutable proof of data origination. Learn more about this approach in the PDAOS white paper.

MyDataKey™ certificates provide timestamped proof that you possessed specific data at a particular moment. This evidence could prove crucial in future litigation against AI companies that used your content without permission.

Own Your Data Inc, the nonprofit organization behind MyDataKey™, believes individuals deserve meaningful control over their digital creations and the ability to prove ownership when their rights are violated.

The current legal battles demonstrate why establishing data ownership before disputes arise makes strategic sense. Waiting until after AI companies use your content makes proving original ownership much more difficult.

How to Protect Your Creative Work Today

While major AI lawsuits work through the courts, individual creators can take immediate steps to protect their rights and establish ownership of their digital works.

Start by documenting your creative process. Save drafts, sketches, source files, and any materials that demonstrate original creation. These records could prove invaluable if you need to establish ownership later.
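As one way to make those records easier to reference later, the sketch below fingerprints every file in a project folder with SHA-256 and notes when the snapshot was taken. It is only an illustration under stated assumptions: the function names (`sha256_of_file`, `build_manifest`) and the manifest layout are hypothetical, and a timestamp you write yourself is self-asserted, so it supplements rather than replaces independent verification or copyright registration.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks to limit memory use."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for block in iter(lambda: handle.read(chunk_size), b""):
            digest.update(block)
    return digest.hexdigest()


def build_manifest(folder: Path) -> dict:
    """List every file under `folder` with its size and SHA-256 fingerprint.

    The layout of this dictionary is a hypothetical choice for illustration.
    """
    entries = []
    for path in sorted(folder.rglob("*")):
        if path.is_file():
            entries.append({
                "file": path.relative_to(folder).as_posix(),
                "bytes": path.stat().st_size,
                "sha256": sha256_of_file(path),
            })
    return {
        # Self-asserted UTC time of this snapshot, not independent proof.
        "recorded_at_utc": datetime.now(timezone.utc).isoformat(),
        "files": entries,
    }
```

Calling `build_manifest(Path("my_project"))` and saving the result (for example with `json.dumps`) alongside your drafts gives you a compact record: if a file changes by even one byte, its fingerprint changes too, so the manifest shows exactly which versions existed when the snapshot was made.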

Consider registering important works with the U.S. Copyright Office, especially if they have commercial value. Registration is generally required before you can sue over a U.S. work, and timely registration makes statutory damages and attorney’s fees available in infringement cases.

Use metadata and digital watermarks to embed ownership information in your files. While this won’t stop AI training, it creates additional evidence of your ownership claims.
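For readers comfortable with a little scripting, here is a minimal sketch of embedding ownership text in a PNG file using only Python's standard library. It writes standard `tEXt` chunks (the PNG format defines keywords such as `Author` and `Copyright`) directly after the image header. The function names are hypothetical, the sketch handles Latin-1 text only, and image editors or dedicated metadata tools do the same job more conveniently. Keep in mind that some platforms strip metadata on upload.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
# The IHDR chunk always comes first: 4 (length) + 4 (type) + 13 (data) + 4 (CRC).
IHDR_CHUNK_SIZE = 25


def make_text_chunk(keyword: str, text: str) -> bytes:
    """Build one PNG tEXt chunk: keyword, a null separator, then the text."""
    data = keyword.encode("latin-1") + b"\x00" + text.encode("latin-1")
    crc = zlib.crc32(b"tEXt" + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + b"tEXt" + data + struct.pack(">I", crc)


def add_png_text(png_bytes: bytes, fields: dict[str, str]) -> bytes:
    """Return a copy of the PNG with one tEXt chunk per field, placed after IHDR."""
    if not png_bytes.startswith(PNG_SIGNATURE) or png_bytes[12:16] != b"IHDR":
        raise ValueError("not a valid PNG file")
    split = len(PNG_SIGNATURE) + IHDR_CHUNK_SIZE
    chunks = b"".join(make_text_chunk(k, v) for k, v in fields.items())
    return png_bytes[:split] + chunks + png_bytes[split:]


def read_png_text(png_bytes: bytes) -> dict[str, str]:
    """Collect all tEXt keyword/text pairs found in the PNG."""
    fields = {}
    offset = len(PNG_SIGNATURE)
    while offset + 8 <= len(png_bytes):
        (length,) = struct.unpack(">I", png_bytes[offset:offset + 4])
        chunk_type = png_bytes[offset + 4:offset + 8]
        data = png_bytes[offset + 8:offset + 8 + length]
        if chunk_type == b"tEXt":
            keyword, _, text = data.partition(b"\x00")
            fields[keyword.decode("latin-1")] = text.decode("latin-1")
        offset += 12 + length  # length field + type + data + CRC
    return fields
```

A typical use would be to read a file's bytes, call `add_png_text(data, {"Author": "Jane Doe", "Copyright": "(c) Jane Doe"})` with your own details in place of the placeholder name, and write the result to a new file so the original stays untouched.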

Establish clear licensing terms for your work. Creative Commons licenses or custom agreements can specify how others may use your content and whether AI training is permitted.

Create timestamped proof of ownership using systems like MyDataKey™ that provide independent verification of when you possessed specific data. Get your certificate today to start building evidence of your digital asset ownership.

Monitor how your content appears online and document unauthorized uses. Screenshot AI-generated content that mimics your style or reproduces your work without permission.

Stay informed about data broker opt-out options and AI training exclusion mechanisms as they become available. Many companies are developing tools to help creators control how their work gets used.

The ongoing legal battles between creative professionals and AI companies will shape digital rights for generations. By understanding these cases and taking proactive steps to protect your work, you’re preparing for a future where proving data ownership could mean the difference between compensation and exploitation.